The Dream Machine: A Story of Creativity, Tinkering, and Cross-Pollination

“Creativity is a function of freedom” – George Pake

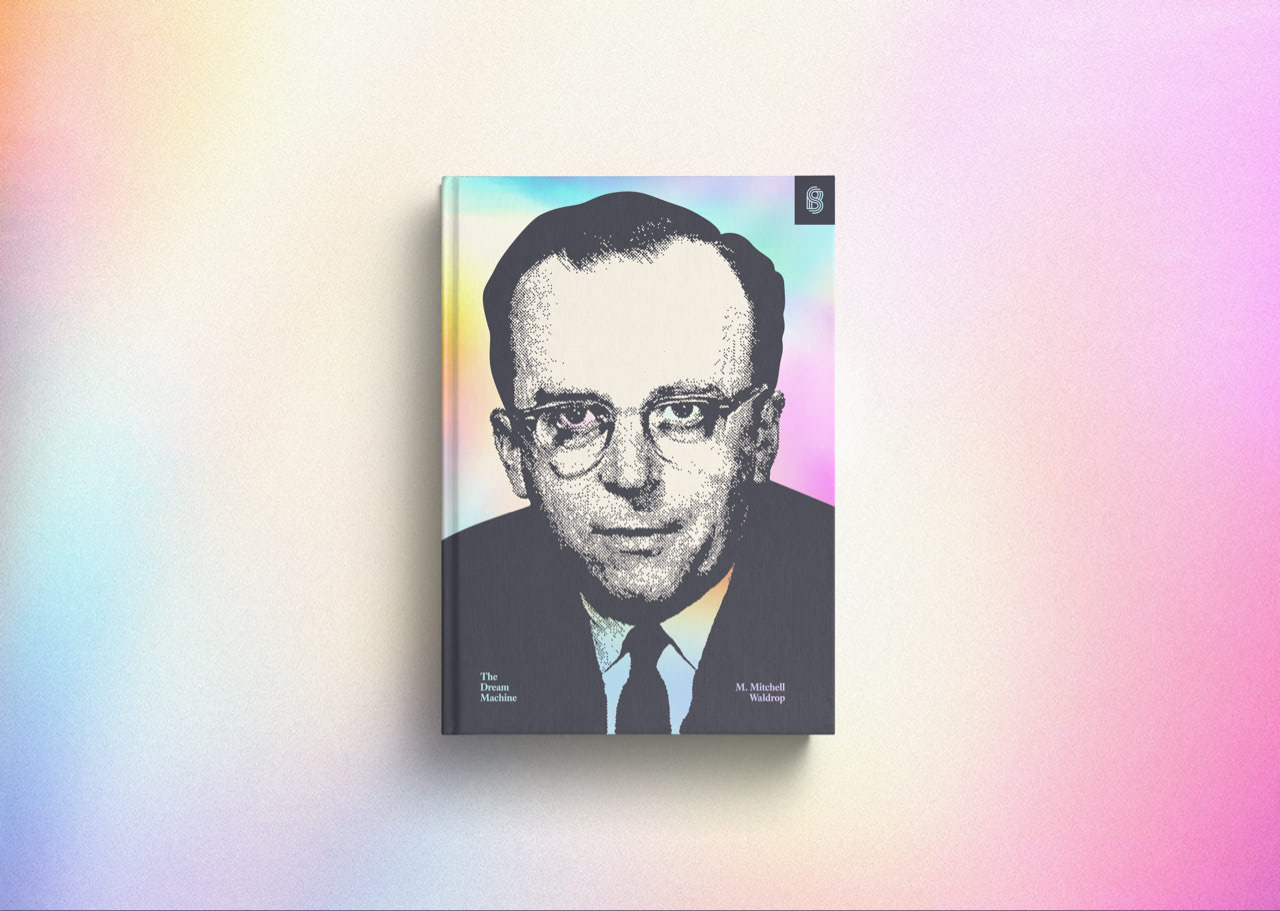

The advent of computers and the internet is a story of human creativity and of the organizations that brought myriad dreams to life. From the 1950s through the 1970s, when a scientific community tinkered with and established the fundamental ideas of computing, and on through the explosion of personal computers and the early internet in the decades that followed, the story is rife with characters and environments that allowed creativity to flourish, along with those that undermined it. The Dream Machine tells this story by following the life of J.C.R. Licklider, who by virtue of his personality and career was a central figure in, and instigator of, much of that creative accomplishment.

Lick, as he insisted on being called, was perennially playful. He tinkered with computational devices and early precursors of modern computers on his own time. Upon learning how to program a Librascope LGP-30 in 1958 (a woefully inadequate, unreliable machine), Lick spent hours on end with it, refining his intuition for how humans could interact with computers. The following year, he got his hands on a DEC PDP-1. It was a monumentally better machine, and he ecstatically spent long evenings sketching out ideas in code, interacting with the computer. He was often not doing serious work (a coworker remarked that Lick would not even save his programs after spending all night on them), but he was happily at play.

The line between “real” work and “merely” playing with new technology was often blurry throughout the advent of modern computing. For example, the popularity of email began with a demo of nascent networking technology. In the mid-1960s, ARPA (the Advanced Research Projects Agency within the Department of Defense) started working on a project to develop computer networking, eventually resulting in Arpanet (a predecessor to the modern internet). Larry Roberts, who led the networking project, wanted to demonstrate their progress to higher-ups, and so he nudged Steve Lukasik (ARPA’s director) to start using the network. Lukasik asked what he could do on Arpanet; Roberts replied “e-mail”. Lukasik began playing with the newly invented technology from a Teletype terminal and in short order found it indispensable, despite a lack of tools for organizing email. In fact, Lukasik complained to Roberts that he was getting a flood of messages which were “just accumulat[ing]”[1]. Nevertheless, Lukasik insisted to the rest of ARPA that “e-mail” would be the only way of communicating with him, turning the technology from a novel toy into a serious business utility.

Lick’s penchant for playing with technology likewise guided him throughout his career. His son described him as a monkey, “always reaching out for that next piece of fruit”. In an era when workers sought stability and prided themselves on spending decades at the same company, Lick’s fruitarianism took him through at least eight distinct roles (after majoring in three subjects as an undergraduate and eventually completing a Ph.D. in a fourth). Across the leadership opportunities in those roles, from co-director of a radar-display development group at MIT to de facto leader of the nationwide computer research community, Lick’s emergent leadership style was characterized in part by making sure there was room for play and fun. He encouraged investigators to make their own mistakes and find their own way; imaginative ideas were always welcome. Colleagues remarked that Lick was “surrounded by an atmosphere of ideas and excitement”. At the same time, Lick had no patience for laziness and sloppy thinking, insisting that his students properly master their tools for theoretical analysis or experimental technique. This “rigorous laissez-faire” approach didn’t work for everyone, but “self-starters who had a clear sense of where they were going” thrived.

It was all in the pursuit of discovering and refining ideas. Lick collected them, toyed with them, and cross-pollinated them. While other professionals were quick to dismiss new ideas as “crazy”, Lick was eager to dig in and explore the possibilities. He loved debating with colleagues, challenging assumptions, and “constantly pushing notions as far as they could go.” Lick integrated ideas from different people and disciplines to paint a compelling roadmap for future projects, invigorating similarly inquisitive people along the way and getting them involved in dreaming up the future. Notably, a generation later, one of Lick’s successors and contemporaries would go on to lead a team at Xerox PARC, where he did his best to seed Lick’s ideas into the researchers’ collective discourse.

Many of the fundamental ideas developed in the 1970s that still underlie modern computing grew out of cross-pollination across various projects and industries.

- Alan Kay, who would go on to invent the preeminent software development paradigm and the graphical-overlapping-windows interface still used on modern computers, was a precocious child of an artist/musician mother and biomedical engineer father. Having read hundreds of books (“full of many kinds of ideas and ways to express them”) by the time he started school, he was perennially bored. He worked as a guitar teacher for a while before becoming a programmer for the Air Force and subsequently at the University of Utah; across the latter two jobs he observed the kernel of an idea that he would further develop when he arrived at PARC in the 1970s. This resulted in Smalltalk, the first “object-oriented” programming language, which encouraged programmers to divide a computer into thousands of “little computers”, each opaquely doing a piece of useful work. This idea would find its way into almost every programming language designed since. Furthermore, inspired by a revelation brought on by Douglas Engelbart’s experiments (famously demoed in 1968) and the progress of Moore’s Law, Kay went on to design and build the first “personal” computers.

- Bob Taylor, the associate director of the Computer Science Lab at PARC where Alan Kay and others invented the future, planted seeds from ideas he had been exposed to at ARPA, notably including work from Vannevar Bush, Licklider, and Douglas Engelbart. He constantly foisted Lick’s papers onto the researchers at PARC, and over time the “self-exciting” researchers gravitated towards the most resonant ideas and incorporated them into the products they were designing.

- Chuck Thacker, who worked on the graphical user interface for the Alto, had previously worked with a new memory technology that was far cheaper than the magnetic-core memory prevalent at the time. That familiarity allowed him to realize that bitmapped displays (where the screen is made of many dots, each mapped to a particular memory address indicating whether that dot is on or off) would become cost-effective and a feasible interface for the Alto. Furthermore, his familiarity with time-sharing systems led him to realize that communication with peripherals could be handled in software rather than with dedicated hardware, which dramatically simplified the hardware needed to implement a computer.

- Seymour Papert, a psychologist at MIT, rejected the notion of education as a process of pouring knowledge into an empty vessel. He insisted that true learning “required the active participation of learner”, driven by curiosity, exploration, and the joy of making new discoveries and mental connections. Alan Kay and others carried this vision forward, believing that computers could enable that active participation and bring true learning to everyone.

- George Pake, director of PARC, knew from his former career as a physicist that investing whatever amount was necessary in cutting-edge equipment often led to breakthrough discoveries; he readily advocated and fought for the lavish budget spent on the Alto.

In fact, the lavish budget available to all the PARC researchers was part of a strategy to collect the best people and let them operate unconstrained. Recruiting them required a few preconditions. First, the research center had to be located near a concentration of existing cutting-edge companies, with easy access to a major research university. Second, they had to find the best people[2] who would foster an environment of intellectual excitement, one where people would have real challenges that would excite them and make them feel like they were working on the real action. A lack of directly relevant experience was not a problem as long as they were thinking the right way. Third, they had to provide lavish resources without skimping. Pake recognized that his job, as director of PARC, was to keep the profits-and-spreadsheets management at bay; they were told to expect results in 5–10 years, not anytime soon. This was a foreign concept for many of Xerox’s managers and executives, and keeping corporate meddling away was difficult and contentious at times, but Pake was mostly successful at doing so.

Once a contingent of innovators was assembled, almost every instance of significant progress came from close collaboration. Lick’s first stint at ARPA saw him stitch together a collaborative effort spanning existing agencies that were already working on computer research, turning what could’ve been a minefield of infighting into a collective advancing a common cause. Assembling this community was also Lick’s way to keep the spirit of interactive computing alive. If he wanted his vision to outlast his tenure at ARPA, he had to forge a community that was self-reinforcing and self-sustaining. There is little doubt that he succeeded in this. His patchwork of organizations was echoed decades later in the late 1980s, when people at ARPA, NASA, NSF, and the Energy Department created an informal organization to connect federal government agencies and universities to the nascent internet and upgrade its capacity to keep up with exploding bandwidth demands. These agencies found it cheaper and easier to cooperate rather than fight since they all needed this technology. If any one of them came up with a good idea, they would collectively find funding for it in one of their budgets. And while official Congressional approval for their projects kept getting delayed, the officials in charge of networking at these organizations agreed to draft their budgets as if they already had support.

Some of the best collaboration happened when people were literally close to each other. A conference of graduate school students in 1968 brought together a coterie of people, many of whom would go on to become pioneers of modern computing. Alan Kay presented his vision of the “Dynabook”, a description of which could apply, virtually unmodified, to today’s laptops or iPads. Steve Crocker, a graduate student at UCLA, kept in touch with several other attendees to discuss networking and networked-application protocols. For a while, they assumed that a group of professionals would eventually come by to tell them how networking was “supposed to be” done. But time went on, nothing of the sort happened, and they realized that a large part of the future of networking was up to them to formulate. Progress was painfully slow, as the people involved were geographically dispersed and trying to reconcile balkanized hardware and software. They eventually decided to gather in one place and hammer out implementation minutiae in person, and they ended up resolving all their incompatibilities over the course of a week in October 1971.

Steve Crocker and others cared enough about the future that they wanted to make it happen. Despite feeling like they knew very little and that experts would inevitably swoop in to tell them they were doing things all wrong, they pushed forward. This sense of caring was a common thread through many of the people in this story.

- Bob Taylor, widely considered an administrative bench-warmer, didn’t want to succumb to his reputation and resolved to make his mark on computing history.

- Charles Herzfeld, ARPA’s director, believed strongly enough in ARPA’s computing projects that he continued to fund them despite depredations in service to the Vietnam War effort.

- Ken Thompson and Dennis Ritchie, who worked on the ill-fated Multics operating system, enjoyed the experience of interacting with it so much that they decided to build their own version after work hours. This became UNIX, which has influenced or underpinned nearly every operating system in the five decades since its release.

Caring enough to take action implies a sense of agency regarding the future: progress towards a better future didn’t “just” happen; it wasn’t inevitable, and care was needed to steer efforts appropriately. As Lick was writing a paper describing the future vision of the “Multinet”[3] (as the Internet was known in 1979), he was afraid of a future where “the year 2000 is new sheet metal on a souped-up 1970s chassis.” This could happen, Lick wrote, because private companies and government departments would develop siloed systems as a result of an inability to work together towards a nebulous, iffy, and far-off vision. The solution, Lick believed, was to expose as many “ordinary people” to networked computers as possible. Communities would then gather around all sorts of interests (a phenomenon that was already happening among the research labs that were able to connect to the network), providing the leadership (or at least internal pressure) needed to align these private companies and departments into creating an open and unencumbered network.

After all, the freedom provided by open systems was behind some of the greatest leaps in this story. Minicomputers freed teams from the tyranny of centralized mainframes run by anointed “white coats”; microcomputers furthered that trend, making computers accessible to everyone. Computer makers including DEC and MITS (maker of the Altair) gave rise to a broad range of new companies that would buy the raw computers, add components and software suited for specific functions, and resell them as complete systems. One of these companies, focused on selling working-out-of-the-box computers to consumers, was Apple Computer.

On the software side, UNIX was the first operating system whose source code was available and modifiable. As a result, volunteers ported it to all sorts of different computers. UNIX wasn’t the only serious option, though; in April 1976, Gary Kildall released CP/M to great commercial success. A clone of CP/M was later acquired by a fledgling Microsoft and released as MS-DOS. Around this time, software applications started becoming a compelling selling point on their own. Businesses were buying computers like the Apple II specifically to run titles like VisiCalc, WordStar, and dBase. Lick served as an investor and advisor in Infocom, a video game developer that would grow to over $6 million in annual sales a few years later.

In stark contrast to the austere, monolithic mainframes of decades past, people found personal computers to be friendly, and even intimate: MIT sociologist Sherry Turkle noted that “…the computer is important not just for what it does but for how it makes you feel. It is described as a machine that lets you see yourself differently, as in control, as ‘smart enough to do science’, as more fully participant in the future.”

But the creative future enabled by personal computers happened in spite of detractors and missteps from the people stewarding its creation.

In the beginning, Lick’s group of psychologists at MIT in the early 1950s was misunderstood and spurned by John Burchard, dean of humanities at the time — to Burchard, psychology meant industrial relations and organizational behavior. He had no comprehension of the information theory, neural networks, and systematic analysis of the brain Lick’s group was working on. Every interaction Lick had with Burchard left Lick infuriated (a rare state for him), and Burchard’s miserly support eventually led Lick’s unofficial department to disband by mid-decade. The dissolution of his group left Lick deeply unhappy, draining the excitement from the rest of his work at MIT.

Burchard appeared to be dismissive of future potential and stuck with preconceived notions. He viewed the world through existing frameworks. Many other people did too:

- In the 1950s, computers were seen as impersonal oracles, and the notion of making them interactive and fun seemed strange. The vacuum tube technology of the time meant that performance scaled super-linearly with price; bigger machines provided more performance-per-dollar. Computer buyers therefore gravitated towards the largest, most serious hardware they could afford. The software was a mere footnote; many dismissed the act of programming as just “writing down a logical sequence of commands” after “real work” had already been done to figure out those commands. To scientists’ chagrin, it turned out to be very easy to make mistakes in the details and it was impossible to predict who the best programmers would be.

- In 1960, Douglas Engelbart lamented that people would try to interpret his ideas in terms of the frameworks they were already familiar with, resulting in a muted appreciation for his novel (and “admittedly strange”) ideas.

- A few years later, as Larry Roberts was working on packet switching for his nascent networks, he learned that several other research groups had independently made the same discovery but had been stymied in their progress by corporate overlords who didn’t have the interest or competence to build it out. Luckily, Roberts was at ARPA, whose entire purpose was cutting through bureaucracy elsewhere, and so he was the one who got to implement “his” network.

Even at PARC, the engineers had grown up with time-sharing and that was the framework with which they designed their early projects. There was a general belief that doing computer research required a time-sharing system. They decided to build a system, entirely from scratch, that would be state-of-the-art. But when they were done, they realized that the best they could do was somewhat of a dead end. Time-sharing was built on a premise that computers were fast and humans were slow, but that turned out to only be true when humans were forced to work the way computers wanted to, with finicky inputs and austere outputs. When computers were tasked with improving the way people actually worked, complete with formatted content and graphics that allowed people to spot patterns, people turned out to be fast and computers slow. The PARC engineers converged upon a vision of personal computers with graphical displays and high-speed networking between them — a vision that Bob Taylor had been espousing since the start, but which had seemed foreign, even incomprehensible, a few years earlier.

While the PARC engineers developed futuristic office systems, they often clashed with the rest of Xerox. Middle managers at corporate HQ wanted nothing more than to ensure that their investment was going to yield results. The PARC researchers, meanwhile, cast this as bureaucratic oppression; they saw themselves as hippie martyrs doing their best to annoy or push back against their corporate overlords. The researchers looked down upon visitors; when an executive in charge of Xerox’s next generation of word processors (little more than sophisticated typewriters at the time) visited, the PARC researchers refused to engage with him because he didn’t understand software and computers. In doing so, the PARC researchers lost an opportunity to influence Xerox’s imminently shipping devices and acquire an internal customer for the technology they were developing. George Pake looked for opportunities to make amends, but even he had to admit that there was a large cultural gap between the creative environment at PARC and the utter lack thereof at Xerox HQ. Pake described the people at HQ as “nonintellectual”: they had no interest in new ideas, new technologies, or the long-term thinking needed to bring them to fruition. Pake was mostly unsuccessful at convincing Xerox executives to focus the company’s efforts on computers[4].

Despite their zeal for progress, the computer scientists at PARC carried additional notions of the computing experience that they later admitted held them back from commercial success. The Alto, PARC’s seminal project, was meant to be a “real”, powerful computer. This was in contrast to the microcomputers that were everywhere by the late 1970s. Alan Kay was surprised at how popular microcomputers were among hobbyists who were ostensibly untrained and uncouth: “It had never occurred to us that people would buy crap.” The Apple II was one of the most popular microcomputers of its time, yet Chuck Thacker derisively noted that its central processor was the same one they had used to control the Alto’s keyboard.

Nevertheless, it was the hobbyists who inexorably took PARC’s ideas and brought them into the world. Lick would’ve considered himself a hobbyist, relying on intuition and hunches to tie together the nascent computing world during a few decades of intense discovery and development. By the late 1970s, the PARC researchers had widely disseminated their research and had become known to everyone who worked with computers. Their window-based UI was not a secret. Steve Jobs’ legendary visit in December 1979 introduced him and his team to the friendliness and interactivity that the Smalltalk programming environment enabled[5]. They would go on to propagate these ideas through Apple’s computers, first with the Lisa and later with the Macintosh in 1984. By the end of the decade, the prevalence of personal computers made it almost a necessity to inter-network them, a user-driven need that resulted in the development of the modern internet. This further enabled hobbyists, including vast numbers who’ve learned how to use and control computers through content discovered and published by others who had gone through similar experiences. The ability to use and control (that is, to program) computers had trickled out of research labs and small local communities, giving rise to large online communities and legions of modern-day hobbyists interacting with their computers and slowly discovering what they can really do.

1. Roberts quickly assembled a program to categorize, retrieve and delete messages, thereby improving the ergonomics of using e-mail based on user feedback.

2. Bob Taylor remarked: “Never hire ‘good’ people, because ten good people together can’t do what a single great one can do”

3. “Computers and Government”, published in the essay compendium The Computer Age: A Twenty-Year View in 1979.

4. Bob Taylor later indicated that he believed Pake wasn’t able to make inroads at HQ because Pake only saw a collection of gadgets without understanding the vision of the whole system himself.

5. The smiling Finder icon on modern Macs is a vestigial remnant of this friendliness.